About Hadoop Spark

This bundle provides a highly available (HA) service on top of a cluster of machines that is resistant to individual machine failure. It is a flexible solution consisting of HDFS, MapReduce, and Spark that can process a wide variety of workloads.

Update the packages on Ubuntu using the command sudo apt-get update. After you enter your password, it refreshes the package lists. You can then install the JDK using the command sudo apt-get install default-jdk. The Java version must be greater than 1.6.

Step 1: Download the Spark tarball from the official Apache Spark website. Once Apache Spark has been installed successfully on the master, deploy Spark on all the slaves.

Install Spark on the slaves:

- Set up the prerequisites on all the slaves. Run the following steps on all the slaves (or worker nodes): add entries in the hosts file, install Java 7, and install Scala.

- Copy the setup from the master to all the slaves.

Hadoop is designed to scale to thousands of servers, each offering local computation and storage capacity, and is able to detect and handle failures at the application layer.

Learn more about this bundle.

In this tutorial you’ll learn how to…

- Get your Hadoop Spark cluster up and running, using JAAS.

- Operate your new cluster.

- Create your first big data workload.

- Change the execution mode of Spark in your cluster.

You will need…

- An Ubuntu One account (you can set it up in the deployment process).

- A public SSH key.

- Credentials for AWS, GCE or Azure.

Spark is commonly deployed alongside Hadoop, so it is best to install it on a Linux-based system. The following steps show how to install Apache Spark.

Step 1: Verifying Java Installation

Java is a prerequisite for installing Spark. Use the following command to verify the Java version.
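A minimal sketch of that check. The variable name JAVA_REPORT and the fallback message are illustrative assumptions, not part of the original tutorial:

```shell
# java -version writes its report to stderr, so redirect it to stdout
# to make it easy to capture or pipe.
if command -v java >/dev/null 2>&1; then
  JAVA_REPORT="$(java -version 2>&1)"
else
  JAVA_REPORT="Java is not installed"
fi
echo "$JAVA_REPORT"
```

On a machine where Java is already set up, running java -version on its own is enough; the guard only makes the missing-Java case explicit.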

If Java is already installed on your system, the command prints the installed Java version.

If Java is not installed on your system, install it before proceeding to the next step.

Step 2: Verifying Scala installation

You need the Scala language to work with Spark, so verify the Scala installation using the following command.
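A minimal sketch of the Scala check, mirroring the Java one. SCALA_REPORT and the fallback message are illustrative names only:

```shell
# Print the Scala version if the scala launcher is on the PATH.
# 2>&1 captures the report whether it goes to stdout or stderr.
if command -v scala >/dev/null 2>&1; then
  SCALA_REPORT="$(scala -version 2>&1)"
else
  SCALA_REPORT="Scala is not installed"
fi
echo "$SCALA_REPORT"
```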

If Scala is already installed on your system, the command prints the installed Scala version.

If Scala is not installed on your system, proceed to the next step to install it.

Step 3: Downloading Scala

Download the latest version of Scala from the Download Scala page. This tutorial uses scala-2.11.6. After downloading, you will find the Scala tar file in the download folder.

Step 4: Installing Scala

Follow the steps given below to install Scala.

Extract the Scala tar file

Use the following command to extract the Scala tar file.
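A sketch of the extraction step. The archive name scala-2.11.6.tgz and the ~/Downloads location are assumptions based on the version named above, so adjust both to match your download:

```shell
# Assumed archive name and location; adjust both to match your download.
SCALA_ARCHIVE="$HOME/Downloads/scala-2.11.6.tgz"

if [ -f "$SCALA_ARCHIVE" ]; then
  # x = extract, z = gunzip, f = read from the named file.
  # -C unpacks the contents next to the archive.
  tar -xzf "$SCALA_ARCHIVE" -C "$(dirname "$SCALA_ARCHIVE")"
else
  echo "Archive not found: $SCALA_ARCHIVE" >&2
fi
```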

Move Scala software files

Use the following commands to move the Scala software files to their directory (/usr/local/scala).
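A sketch of the move, assuming the archive was unpacked into ~/Downloads/scala-2.11.6 (a hypothetical path) and that your account can use sudo to write to /usr/local:

```shell
SCALA_SRC="$HOME/Downloads/scala-2.11.6"   # assumed extraction directory
SCALA_DEST="/usr/local/scala"              # target directory from the text

# Move only when the source exists and the target is still free,
# so an existing installation is never overwritten.
if [ -d "$SCALA_SRC" ] && [ ! -e "$SCALA_DEST" ]; then
  sudo mv "$SCALA_SRC" "$SCALA_DEST"
else
  echo "Nothing moved: check $SCALA_SRC and $SCALA_DEST" >&2
fi
```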

Set PATH for Scala

Use the following command to set the PATH for Scala.
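A minimal sketch, assuming Scala was moved to /usr/local/scala so that its launcher scripts live in the bin subdirectory:

```shell
# Append the Scala bin directory to PATH for the current shell session.
export PATH="$PATH:/usr/local/scala/bin"
```

This affects only the current session. To make it permanent, the same line can be added to ~/.bashrc.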

Verifying Scala Installation

After installation, it is a good idea to verify it. Use the following command to verify the Scala installation.

If Scala is installed correctly, the command prints the installed Scala version.
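One possible way to verify, sketched here, is to check which scala executable the shell resolves before printing its version. SCALA_CMD is an illustrative variable name, and the /usr/local/scala/bin location assumes the earlier steps were followed as written:

```shell
# Resolve the scala launcher the shell would run (empty if none is found).
SCALA_CMD="$(command -v scala || true)"

case "$SCALA_CMD" in
  /usr/local/scala/bin/*)
    echo "Using the new install: $SCALA_CMD"
    scala -version
    ;;
  "")
    echo "scala not found on PATH" >&2
    ;;
  *)
    echo "Using a different Scala install: $SCALA_CMD"
    scala -version
    ;;
esac
```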

Step 5: Downloading Apache Spark

Download the latest version of Spark from the Download Spark page. This tutorial uses spark-1.3.1-bin-hadoop2.6. After downloading, you will find the Spark tar file in the download folder.

Step 6: Installing Spark

Follow the steps given below for installing Spark.

Extracting Spark tar

Use the following command to extract the Spark tar file.
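A sketch of the extraction step. The archive name spark-1.3.1-bin-hadoop2.6.tgz and the ~/Downloads location are assumptions derived from the version named above:

```shell
# Assumed archive name and location; adjust both to match your download.
SPARK_ARCHIVE="$HOME/Downloads/spark-1.3.1-bin-hadoop2.6.tgz"

if [ -f "$SPARK_ARCHIVE" ]; then
  # Unpack the gzip-compressed tarball next to the archive.
  tar -xzf "$SPARK_ARCHIVE" -C "$(dirname "$SPARK_ARCHIVE")"
else
  echo "Archive not found: $SPARK_ARCHIVE" >&2
fi
```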

Moving Spark software files

Use the following commands to move the Spark software files to their directory (/usr/local/spark).
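A sketch of the move, assuming the archive was unpacked into ~/Downloads/spark-1.3.1-bin-hadoop2.6 (a hypothetical path) and that sudo is available:

```shell
SPARK_SRC="$HOME/Downloads/spark-1.3.1-bin-hadoop2.6"   # assumed extraction directory
SPARK_DEST="/usr/local/spark"                           # target directory from the text

# Refuse to move when the source is missing or the target already exists.
if [ -d "$SPARK_SRC" ] && [ ! -e "$SPARK_DEST" ]; then
  sudo mv "$SPARK_SRC" "$SPARK_DEST"
else
  echo "Nothing moved: check $SPARK_SRC and $SPARK_DEST" >&2
fi
```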

Setting up the environment for Spark

Add the following line to the ~/.bashrc file. This adds the location of the Spark software files to the PATH variable.
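A minimal sketch of such a line, assuming Spark was moved to /usr/local/spark as in the previous step:

```shell
# Spark's launcher scripts (spark-shell, spark-submit) live in bin/.
export PATH="$PATH:/usr/local/spark/bin"
```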

Use the following command to source the ~/.bashrc file.
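A minimal sketch of reloading the file in the current shell. The existence check is an added safeguard, not something the original tutorial requires:

```shell
# Re-read ~/.bashrc so the updated PATH takes effect without logging out.
# "." is the portable spelling of bash's "source" builtin.
if [ -f "$HOME/.bashrc" ]; then
  . "$HOME/.bashrc"
fi
```

In an interactive bash session, typing source ~/.bashrc does the same thing.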

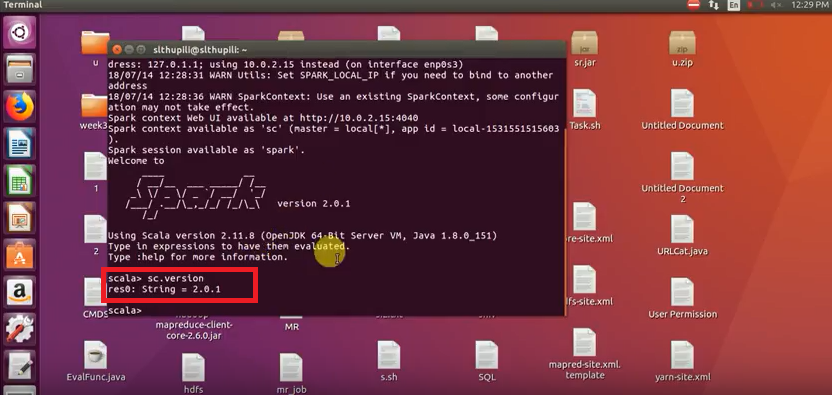

Step 7: Verifying the Spark Installation

Use the following command to open the Spark shell.

If Spark is installed successfully, the shell starts, prints the Spark welcome banner with the version number, and leaves you at the scala> prompt.
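A sketch of launching the shell, assuming /usr/local/spark/bin was added to the PATH in the previous step. SPARK_SHELL is an illustrative variable name:

```shell
# Resolve the spark-shell launcher (empty if it is not on the PATH).
SPARK_SHELL="$(command -v spark-shell || true)"

if [ -n "$SPARK_SHELL" ]; then
  # Starts the interactive Scala REPL with Spark preloaded.
  "$SPARK_SHELL"
else
  echo "spark-shell not found; check that /usr/local/spark/bin is on PATH" >&2
fi
```

Once the PATH is set correctly, typing spark-shell on its own is sufficient.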